AI Ethics and Moral Imagination

AI ethics is not only about justifying what is right and wrong. It is equally about the ability to find creative solutions, through open dialogue, to the moral and technical challenges posed by contemporary AI. Inspired by philosophical pragmatism, this talk presents ethics as a form of moral problem-solving. The focus is not only on arguments and principles, but also on dialogue, the exchange of perspectives, and creative moral imagination.

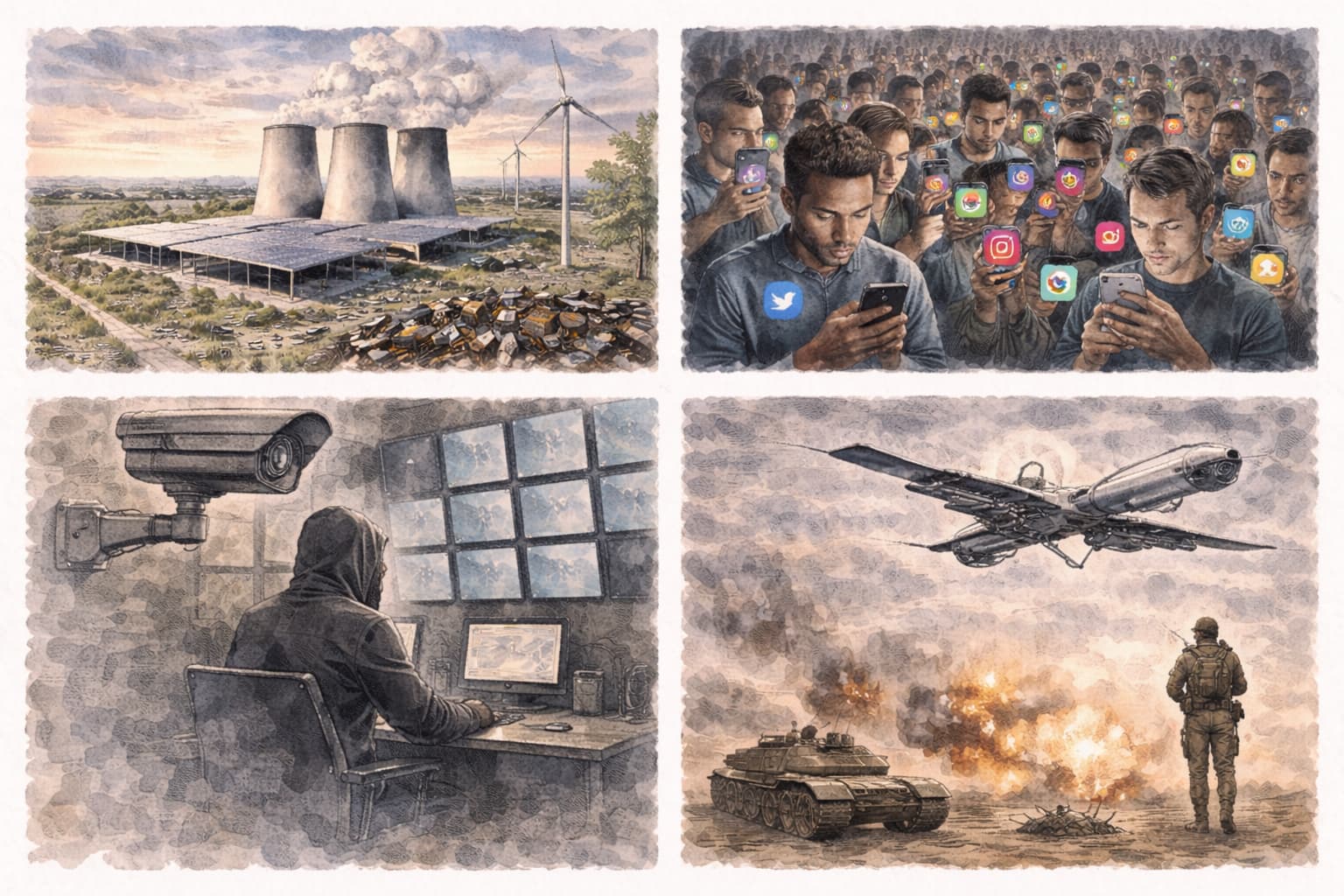

AI Challenges Our Moral Creativity

Artificial intelligence is not merely a technological breakthrough; it is an ethical stress test for society as a whole. Its enormous energy consumption enlarges its climate footprint, while power becomes concentrated among a few actors, challenging democratic control. At the same time, AI pushes the boundaries of privacy, surveillance, and autonomy, and risks amplifying existing inequalities.

The technology also opens the door to misuse, from misinformation and identity theft to addictive design. Bio-AI illustrates this duality especially clearly: the same tool that can save lives can also lower the threshold for developing biological weapons, increasing the risk of new man-made pandemics.

In security, AI intensifies a global arms race in which autonomous systems could make life-and-death decisions. In short, there is an urgent need for reflective dialogue, moral imagination, and nuanced judgement.

From Ethical Challenges to Moral Practice

After the overview of ethical challenges, we shift gears. From principle to practice.

We look closely at real cases. What went wrong — and why? Here moral imagination becomes concrete: third-party oversight, whistleblower schemes, and codes of conduct as tools that can actually make a difference. Not in theory. In practice.

Then we turn the lens inward. How vulnerable is your own organization? Which risks does the EU AI Act already address, and which could be avoided through genuine compliance? The aim is to distinguish compliance on paper from compliance in practice.

How can ethics be built in from the start, in DevOps, in continuous discovery, and in the development logic itself? The point is simple: responsibility must be integrated into practice, not bolted on afterwards. Here, value sensitive design becomes a working method rather than an empty declaration of intent.