Responsible AI in Practice

As AI systems increasingly support decision-making, knowledge work, and software development, the question is no longer only what the technology can do, but also how it should be understood and how it can be used responsibly. Foundation models in particular (large, general-purpose AI systems trained on enormous amounts of data) raise new ethical questions about environmental impact, concentration of power, privacy violations, discrimination, uncertainty, risk, and organizational responsibility. The workshop introduces what these models are, and what they are not, and focuses on the ethical questions and moral dilemmas they raise. Drawing on the debate around probabilistic AI systems, we also discuss which organizational and management tools and practices can help identify, assess, and address moral problems. Grounded in philosophy of science, AI ethics, and practical experience with AI systems, the workshop presents participants with concrete ethical dilemmas, which they work through in groups using different frameworks for AI governance. The workshop concludes with an open dialogue about the balance between responsible AI management and governance on the one hand and innovation, experimentation, and creative application on the other.

Understanding What AI Systems Actually Are

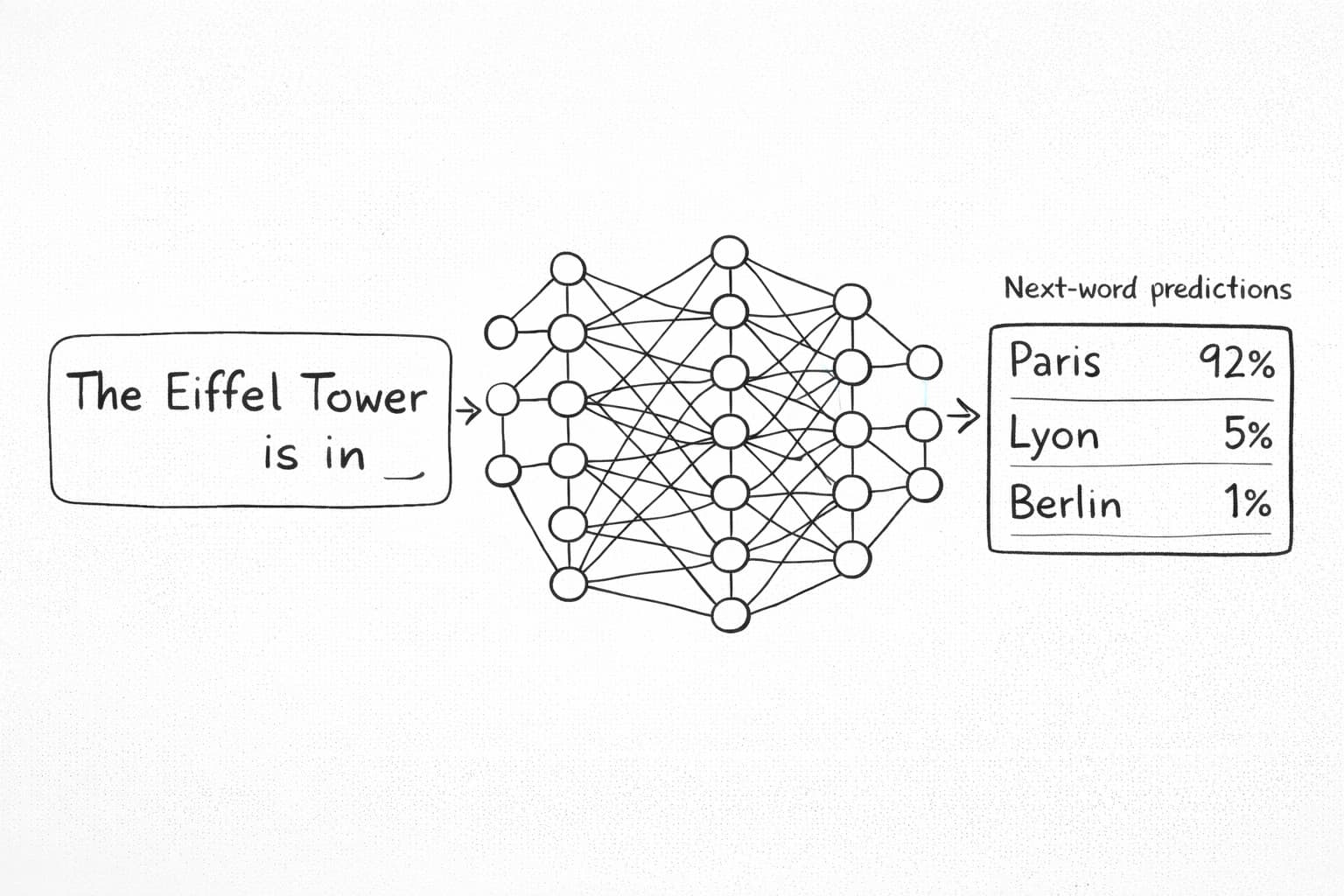

Before we can use AI responsibly, we must understand what we are working with. Large Language Models (LLMs) in particular are neither databases of knowledge nor systems with genuine understanding. They are probabilistic models trained to predict the next word (more precisely, the next token) in a text, so their answers are statistically probable continuations of language patterns in the training data.

This means that when doctors, recruitment specialists, financial advisors, students, psychologists, or lawyers rely on AI answers, they may receive many correct and useful responses and still be misled by false but convincing formulations. An AI answer is not an established fact; it is a probable linguistic result generated from patterns in data. An answer may also be a so-called hallucination: the model can produce statements that sound plausible but are fabricated, imprecise, false, or discriminatory. This is a consequence of the model's probabilistic nature. What the model omits can have consequences as serious as what it states.

Participants work with examples from AI development and knowledge work. The focus is practical: how to assess an AI answer, when to verify it, and how to design workflows that handle uncertainty explicitly.
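To make the idea of "predicting the next word" concrete, the following is a minimal sketch of a toy bigram model in Python. It is an illustration only: the corpus, the function names, and the fixed random seed are assumptions made for this example, and a real LLM uses a neural network over tokens rather than simple word counts. The principle it shows is the same, though: the output is drawn from a probability distribution over continuations, not looked up as a fact.

```python
import random
from collections import Counter, defaultdict

# Toy corpus, invented for illustration; not real training data.
corpus = (
    "the patient has a fever . "
    "the patient has a cough . "
    "the patient has a fever . "
    "the model has a bias ."
).split()

# Count which word follows each word (a bigram model).
follow_counts = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    follow_counts[current][nxt] += 1


def next_word_distribution(word):
    """Return the estimated probability of each word seen after `word`."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def sample_next(word, rng):
    """Draw the next word at random, weighted by its estimated probability."""
    dist = next_word_distribution(word)
    words = list(dist)
    weights = [dist[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


# Distribution over continuations of "a": fever 0.5, cough 0.25, bias 0.25.
dist = next_word_distribution("a")

# Repeated sampling can give different continuations for the same prompt.
rng = random.Random(0)  # fixed seed only so the sketch is repeatable
samples = [sample_next("a", rng) for _ in range(5)]
print(dist)
print(samples)
```

In this toy model, "fever" is the most likely continuation of "the patient has a" simply because it is the most frequent pattern in the data, regardless of whether it is true of any particular patient. That is the sense in which an answer is probable rather than verified.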

AI Ethics in Everyday Technical Work

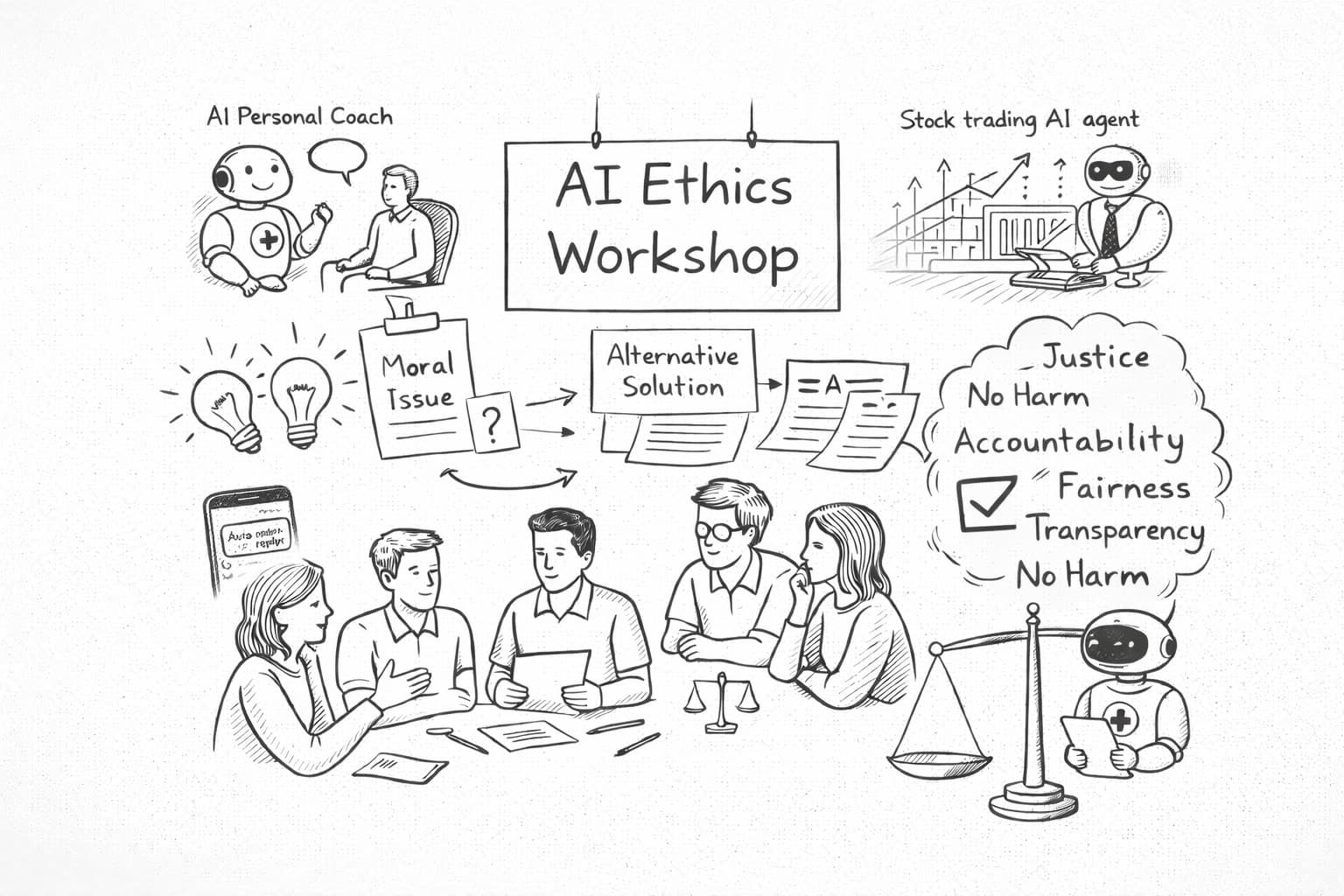

This workshop presents a pragmatic approach to ethical dilemmas in AI.

The starting point is that ethical challenges rarely arise as clear theoretical questions with well-defined options for action. They emerge in the midst of concrete design choices, product decisions, and technical compromises, or in the unforeseen use (perhaps misuse) of an AI-based app that was developed with only good intentions.

We therefore work not only with reasoning and arguments, but also with moral imagination and creative problem-solving: the ability to understand and define the moral problem, to imagine alternative solutions and their consequences, and to find responsible ways to balance values in the design of AI-based systems.

Participants are introduced to this approach through cases from real AI projects. We analyze concrete situations where teams must navigate between considerations such as fairness, transparency, innovation, safety, and responsibility.

The EU AI Act — Legislation as a Moral Tool

The EU AI Act applies to organizations that provide or deploy AI systems in the EU, with obligations that depend on the risk level of the system. The task is to translate the rules into practical everyday work: assessing risks, documenting solutions, ensuring transparency, and continuously monitoring how the systems perform.

The Act's requirements around human oversight, transparency, and accountability are not just legal obligations; they reflect the same epistemic and ethical principles explored earlier in the workshop. The workshop provides insight into the AI Act's key requirements as well as examples of technical and organizational approaches to handling compliance in practice. Participants leave inspired and better equipped to work with AI governance and compliance in their own organization.