Professional Vibe Engineering

AI coding tools are already inside IDEs, pipelines, and daily workflows. Yet many teams use them in an improvised way — prompting, retrying, and hoping the output holds up. This workshop establishes a disciplined, scalable approach to AI-assisted software development that works in production environments, not just demos.

From Prompting to Professional AI Engineering

In many teams, AI adoption begins with excitement. A developer generates a function in seconds. Tests are scaffolded instantly. Documentation appears on demand. The productivity gains feel real. Then complexity enters the picture.

The generated code integrates imperfectly with an existing architecture. Subtle hallucinations creep into edge cases. Responsibility becomes blurred. When systems grow larger, ad-hoc prompting begins to show its limits.

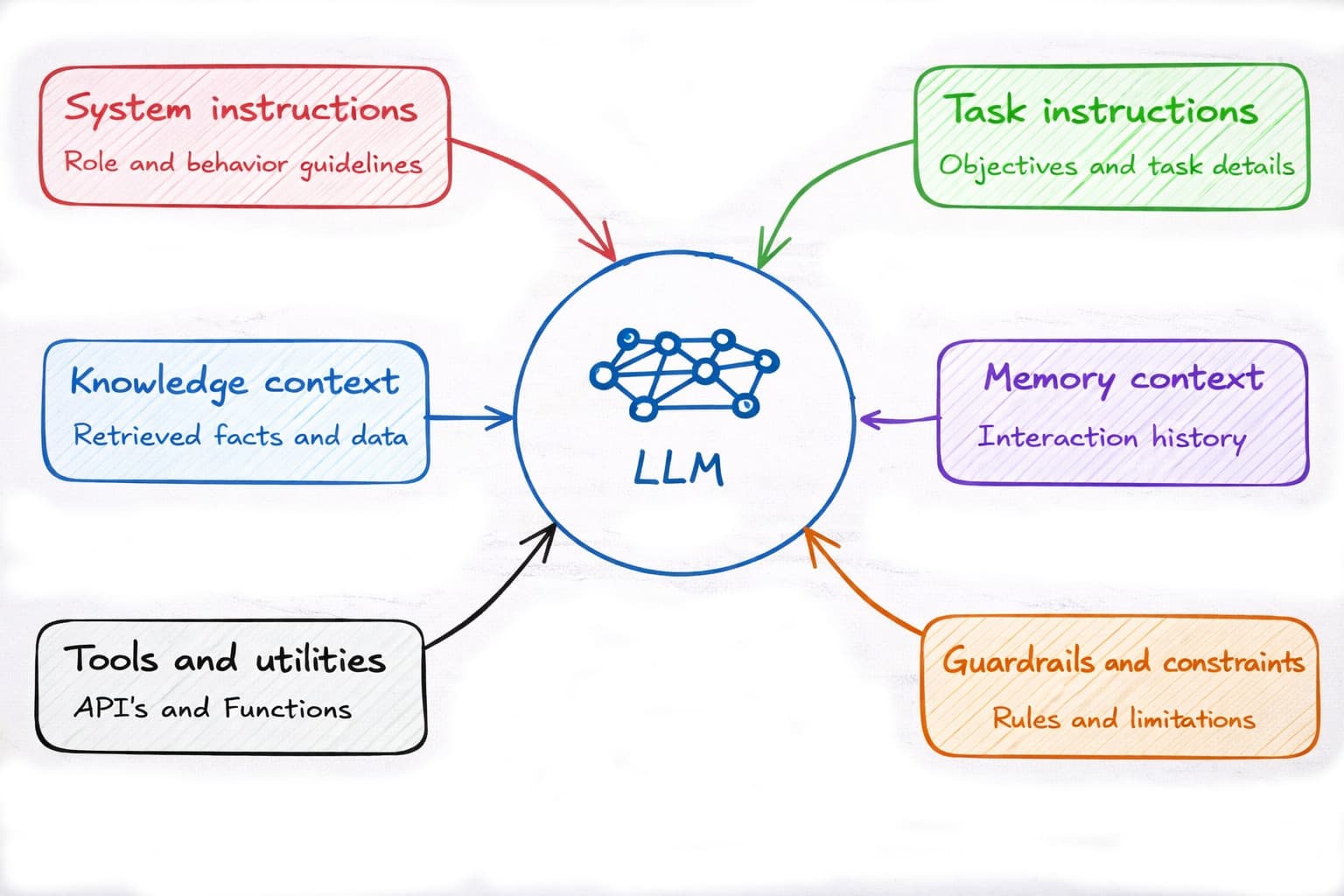

Professional vibe engineering addresses this transition point. Instead of relying on clever prompts, we design structured context setups with explicit system instructions, architectural boundaries, reusable prompt patterns, and well-defined output contracts. We treat prompts as engineering artifacts and design LLM interactions as workflows.
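One way to picture "prompts as engineering artifacts" is a small, versioned definition that lives in the repository and is reviewed like any other source file. The sketch below is illustrative only: `PromptSpec`, its fields, and the example rules are hypothetical names chosen for this example, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """A versioned prompt definition (hypothetical structure for illustration)."""
    name: str
    version: str
    system_instructions: str
    architectural_boundaries: tuple[str, ...]
    required_output_keys: tuple[str, ...]  # the output contract

    def render(self, task: str) -> str:
        """Assemble the full context in a fixed, predictable order."""
        boundaries = "\n".join(f"- {rule}" for rule in self.architectural_boundaries)
        keys = ", ".join(self.required_output_keys)
        return (
            f"{self.system_instructions}\n\n"
            f"Architectural boundaries:\n{boundaries}\n\n"
            f"Task:\n{task}\n\n"
            f"Reply with a JSON object containing exactly these keys: {keys}"
        )

    def missing_keys(self, output: dict) -> list[str]:
        """Return the contract keys that are absent from a parsed model reply."""
        return [key for key in self.required_output_keys if key not in output]


# Example values are assumptions made up for this sketch.
REFACTOR_PROMPT = PromptSpec(
    name="refactor-function",
    version="1.0.0",
    system_instructions="You are a careful refactoring assistant.",
    architectural_boundaries=(
        "Do not change public function signatures.",
        "Do not add new third-party dependencies.",
    ),
    required_output_keys=("code", "explanation", "tests"),
)

prompt_text = REFACTOR_PROMPT.render("Simplify the parse_order function.")
problems = REFACTOR_PROMPT.missing_keys({"code": "...", "explanation": "..."})
print(problems)  # ['tests']
```

Because the definition is ordinary code, a change to the instructions or the contract shows up in a diff and can be reviewed before it reaches the team.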

LLM Agent Systems That Scale Beyond Experiments

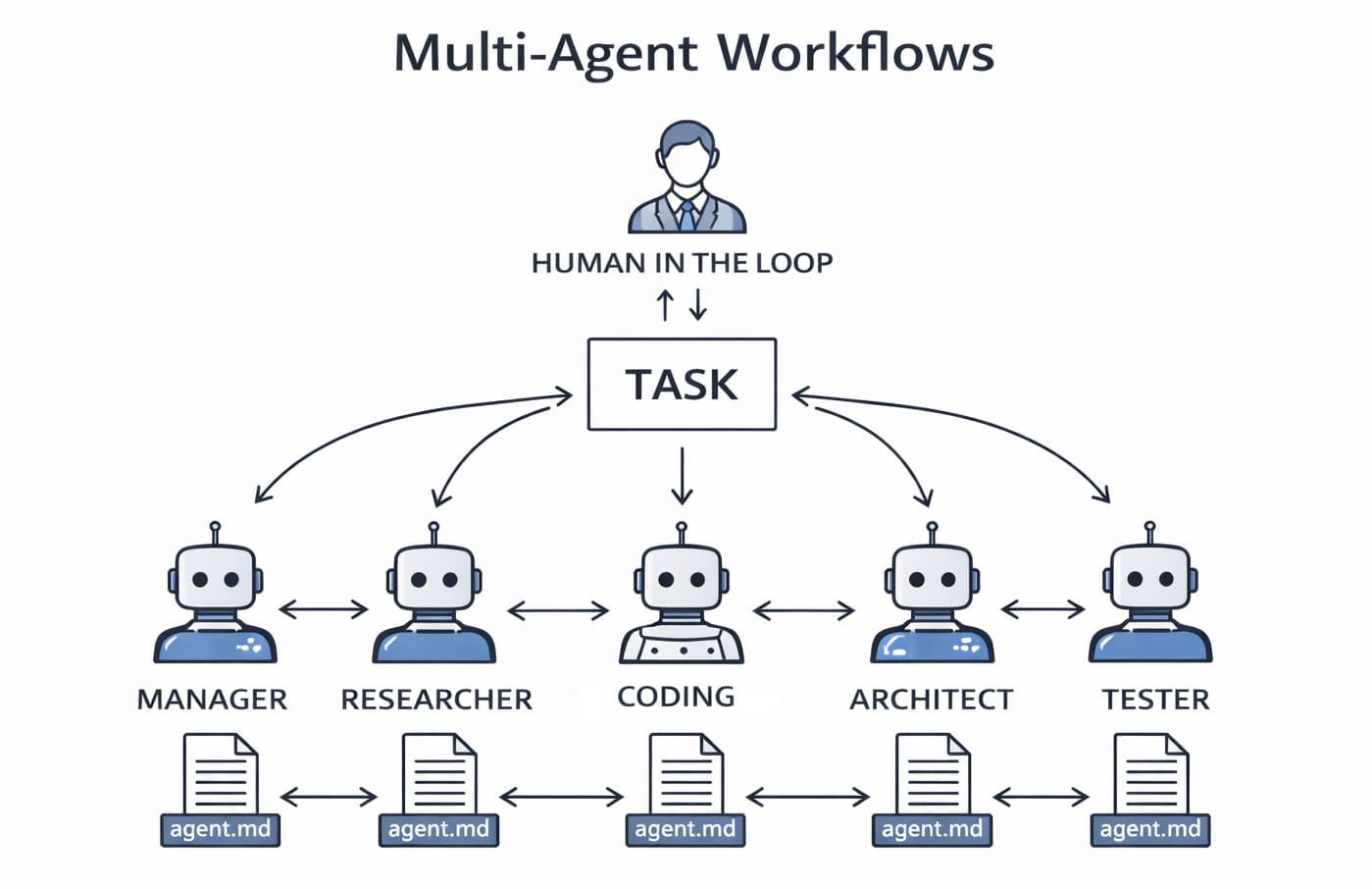

I teach how to design multi-agent workflows where architecture, implementation, review, testing, and refactoring are clearly separated into defined roles. Each agent operates within constraints. Each output is validated. Each step remains traceable and reviewable by humans.

The focus is not on hype or automation fantasies. It is on designing AI-supported workflows that respect software architecture, maintain developer ownership, and integrate cleanly into CI/CD pipelines with automated tests and static code analysis.
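The separation into roles, with validation and traceability at each step, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `Step`, `TraceEntry`, and `run_pipeline` are hypothetical names, and the agents are plain stub functions standing in for real LLM calls so the example runs without any model or network.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    role: str                        # e.g. "architect", "implementer", "reviewer"
    run: Callable[[str], str]        # stand-in for a call to an LLM agent
    validate: Callable[[str], bool]  # constraint the output must satisfy


@dataclass
class TraceEntry:
    role: str
    input_text: str
    output_text: str
    accepted: bool


def run_pipeline(steps: list[Step], task: str) -> tuple[str, list[TraceEntry]]:
    """Run each role in order and stop at the first output that fails validation."""
    trace: list[TraceEntry] = []
    current = task
    for step in steps:
        output = step.run(current)
        accepted = step.validate(output)
        trace.append(TraceEntry(step.role, current, output, accepted))
        if not accepted:
            break  # hand over to a human instead of passing bad output along
        current = output
    return current, trace


# Stub agents (assumptions for this sketch, not real model calls).
steps = [
    Step("architect", lambda t: f"PLAN: {t}", lambda o: o.startswith("PLAN:")),
    Step("implementer", lambda p: f"def add(a, b):\n    return a + b  # {p}", lambda o: "def " in o),
    Step("reviewer", lambda c: "APPROVED" if "return" in c else "REJECTED", lambda o: o == "APPROVED"),
]

result, trace = run_pipeline(steps, "add two numbers")
for entry in trace:
    print(entry.role, "accepted" if entry.accepted else "rejected")
```

The trace records what every role received and produced, which is what makes each step reviewable by a human after the fact.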

Guardrails, Quality Control, and Accountability

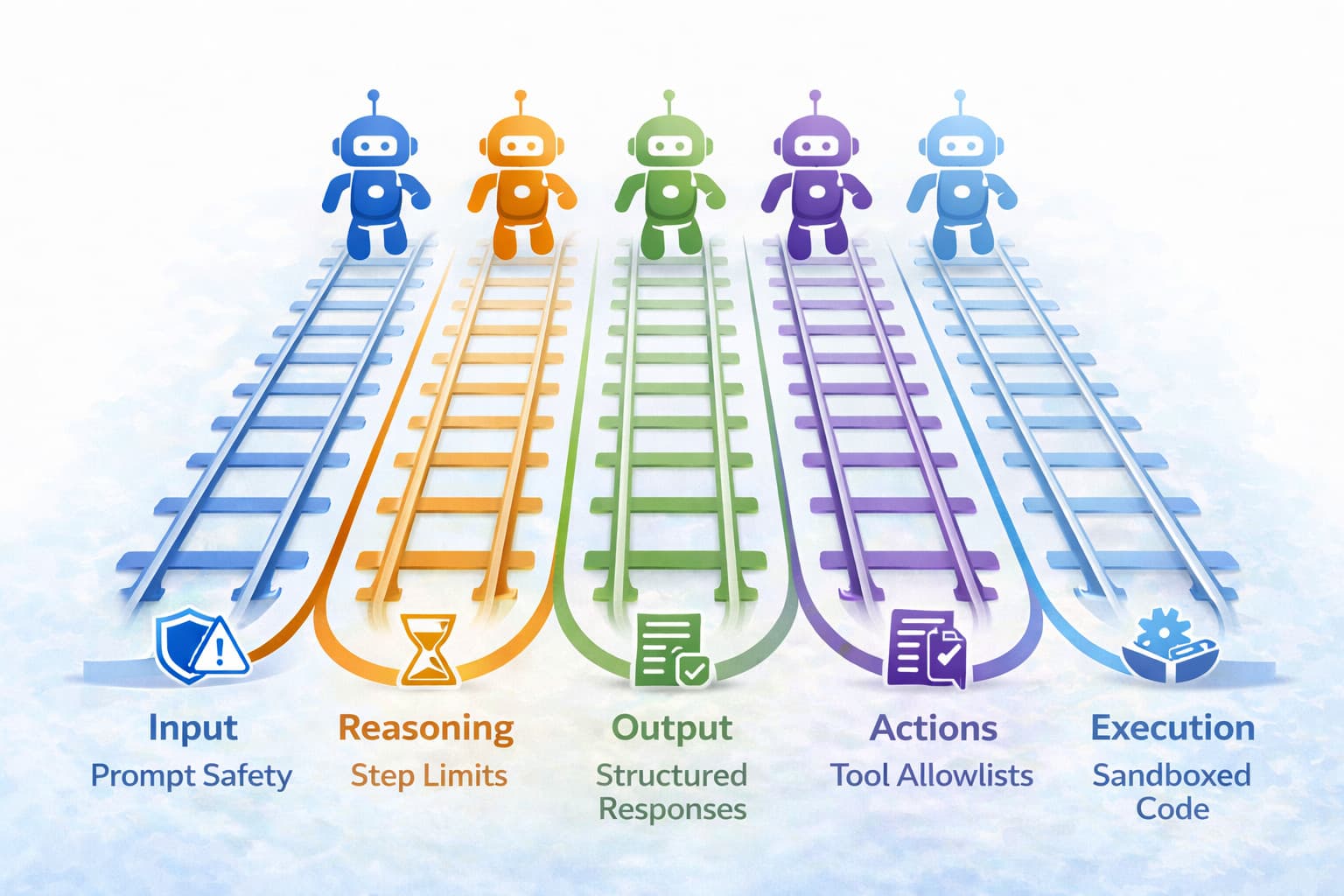

Large language models hallucinate. That is not a reason to avoid them; it is a reason to work with discipline, within explicit constraints and rules, the so-called guardrails. Professional vibe engineering is therefore not just about writing good prompts, but about establishing a structured process around AI-generated code. This includes structured output schemas, verification prompts, self-check loops, test generation patterns, and integration with static code analysis and other automated quality controls. AI can also play an active role in quality assurance through code review agents that analyze and comment on code before it reaches human reviewers.
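A structured output schema combined with a self-check loop might look roughly like the following. The schema in `REQUIRED_FIELDS`, the function names, and the retry limit are assumptions made for this sketch, and `fake_model` is a stub that replays canned replies in place of a real LLM call.

```python
import json
from typing import Callable

# Hypothetical output schema: field name -> expected Python type.
REQUIRED_FIELDS = {"summary": str, "code": str, "risks": list}


def check_output(raw: str) -> list[str]:
    """Return a list of schema violations; an empty list means the output is valid."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        elif not isinstance(data[name], expected_type):
            errors.append(f"field {name} must be of type {expected_type.__name__}")
    return errors


def generate_with_self_check(model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model, validate the reply, and feed violations back until it passes."""
    current_prompt = prompt
    for _ in range(max_attempts):
        raw = model(current_prompt)
        errors = check_output(raw)
        if not errors:
            return json.loads(raw)
        current_prompt = f"{prompt}\n\nYour previous reply was rejected:\n" + "\n".join(errors)
    raise ValueError(f"no valid output after {max_attempts} attempts")


# Stub model: the first reply breaks the schema, the second one satisfies it.
replies = iter([
    "Sure! Here is the code...",
    json.dumps({"summary": "adds two numbers", "code": "def add(a, b): return a + b", "risks": []}),
])
fake_model = lambda prompt: next(replies)

result = generate_with_self_check(fake_model, "Write an add function.")
print(result["summary"])  # adds two numbers
```

The loop is bounded on purpose: when the model cannot produce a valid reply within the limit, the failure surfaces as an error rather than as silently accepted output.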

AI-generated code is never accepted on aesthetics or simple manual tests alone. It is validated through measurable quality controls, automated tests, and systematic review processes. At the same time, humans remain a central part of quality assurance through human-in-the-loop practices, where developers assess, challenge, and approve the AI's suggestions.
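The combination of measurable controls and human approval can be expressed as a simple merge gate. The sketch below is only an illustration: `QualityReport`, `blocking_reasons`, and the 80% coverage threshold are assumptions for this example, and in practice the values would come from the CI pipeline and the review tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityReport:
    tests_passed: bool
    static_analysis_findings: int
    line_coverage: float  # fraction between 0.0 and 1.0
    human_approved: bool


def blocking_reasons(report: QualityReport, min_coverage: float = 0.8) -> list[str]:
    """Return the reasons a change is blocked; an empty list means it may merge."""
    reasons = []
    if not report.tests_passed:
        reasons.append("automated tests failed")
    if report.static_analysis_findings > 0:
        reasons.append(f"{report.static_analysis_findings} static analysis finding(s)")
    if report.line_coverage < min_coverage:
        reasons.append(f"coverage {report.line_coverage:.0%} is below {min_coverage:.0%}")
    if not report.human_approved:
        reasons.append("no human approval recorded")
    return reasons


# All automated checks are green, but no developer has signed off yet.
report = QualityReport(
    tests_passed=True,
    static_analysis_findings=0,
    line_coverage=0.91,
    human_approved=False,
)
print(blocking_reasons(report))  # ['no human approval recorded']
```

Green automated checks alone do not open the gate here; the human sign-off is a separate, explicit condition.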

DevSecValOps — Ethical Values in the Development Process

This also brings an important responsibility: not only for technical quality, but for the consequences systems can have for users and society. Software development has seen a similar evolution before, from DevOps to DevSecOps, where security became an integrated part of the development process. Inspired by design traditions such as Value Sensitive Design, we can ask a similar question about AI development: What would DevSecValOps look like in your organization? In other words, how can ethical considerations (not only the organization's own values, but also regard for users, citizens, and society) become a real part of the team's daily practice? Not as a document from management, but as something that enters into design decisions, code reviews, test strategies, and the guardrails that shape the use of AI tools.

From Workshop to Practice — Your Next Steps with AI

A workshop in professional vibe engineering can serve as a kick-off for your team's approach to AI-assisted development. During the day we work with concrete techniques and establish a shared understanding of what AI can — and cannot — realistically do in your context.

The workshop gives you a common language and a set of principles that the team can build on. It can form the basis for your own guidelines for AI in development, quality requirements for AI-generated code, or an internal strategy for using LLM tools and sharing agent workflows across the organization.

Whether you are just getting started with AI coding tools or already working with them daily, the workshop creates a common foundation. The team gets space to reflect and make deliberate choices about how AI should interact with your existing practices. The goal is a responsible development process where AI is used systematically to deliver real value, with high quality, accountability, and more room for innovation.