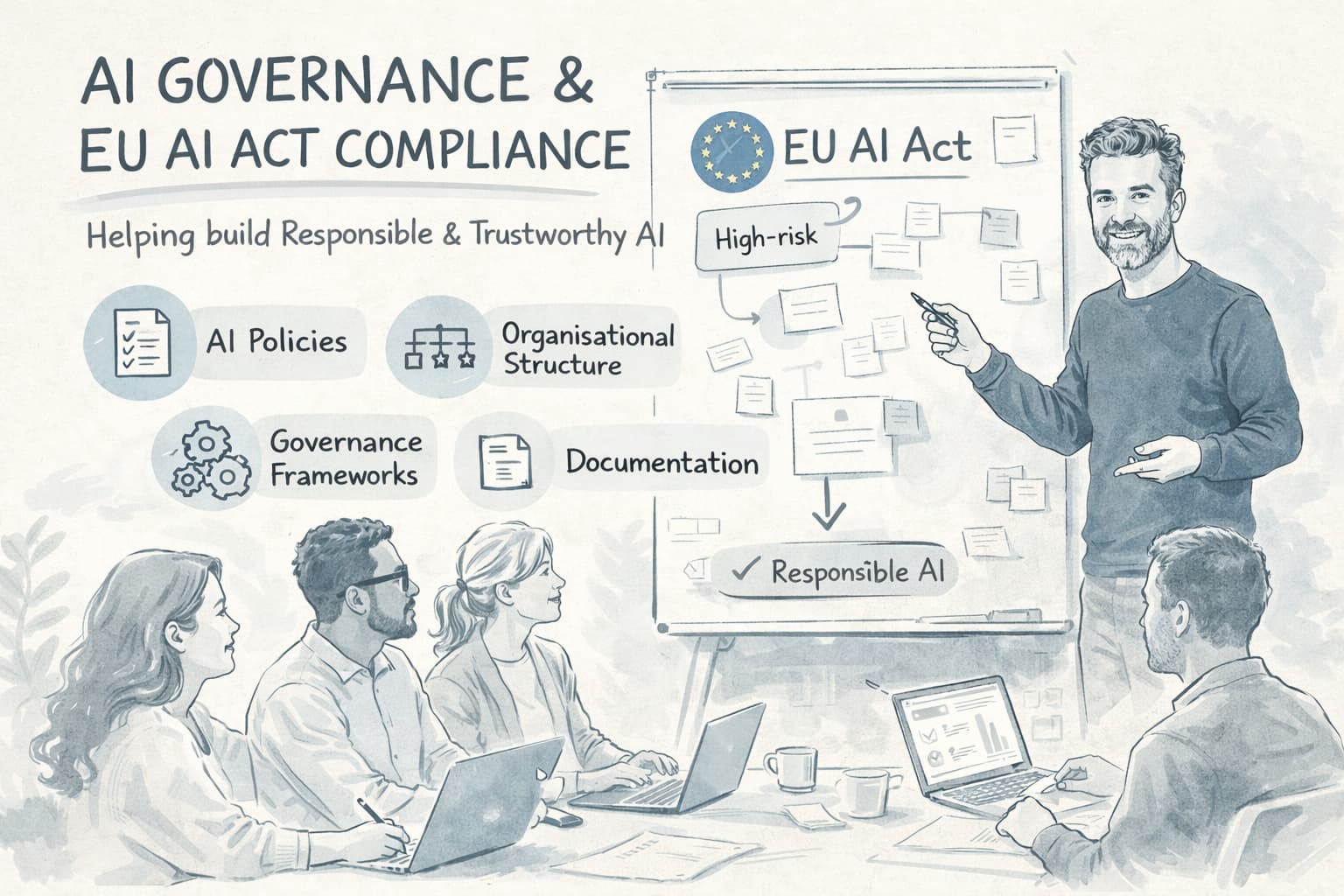

AI Governance

I help organizations get a better grip on their use of AI, so that new solutions can be developed and adopted with a clear understanding of risks, responsibilities, and applicable requirements. The goal is to provide an overview and a sense of direction, making it easier to assess where and how AI delivers value.

EU AI Act Readiness

The EU AI Act introduces risk-based obligations that affect how you build, test, deploy, and monitor AI systems. I help teams understand which of their AI use cases fall into high-risk categories, what technical and organizational requirements apply, and what concrete steps are needed to achieve and maintain compliance.

This is not a legal opinion — it is practical engineering guidance. I translate regulatory requirements into technical specifications: documentation standards, risk assessment processes, human oversight mechanisms, and the monitoring infrastructure needed to demonstrate ongoing compliance.

Responsible AI in Practice

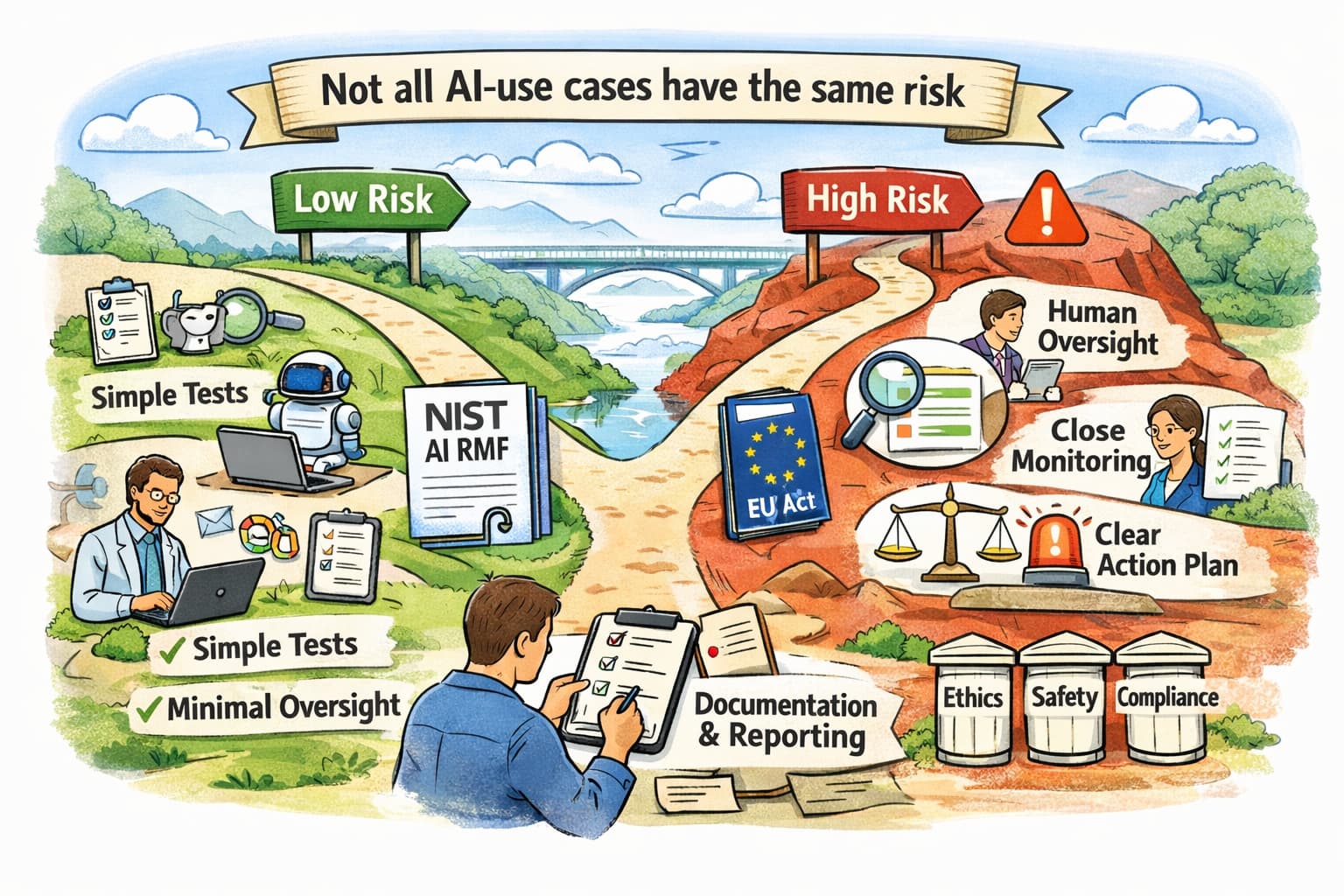

Not all AI use cases carry the same risk. I help organizations see where extra attention may be needed, drawing on the NIST AI Risk Management Framework and the EU AI Act.

I help identify which types of technical tests make sense — for example regarding biases in data or model behaviour — as well as what documentation is required and how it can be integrated into the development workflow.
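
To make this concrete, here is a minimal sketch of one such test: comparing how often a model gives a positive outcome to different groups (often called a demographic parity check). The function names, the example data, and the `MAX_GAP` threshold are all illustrative assumptions. Neither the EU AI Act nor the NIST framework prescribes this particular metric or value, and the right test depends on the use case.

```python
from collections import defaultdict

# Hypothetical tolerance, chosen for illustration only.
# A real threshold has to be agreed per use case.
MAX_GAP = 0.2


def selection_rates(predictions, groups):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups):
    """Return the largest gap in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Made-up example: binary model decisions and a group label per case.
preds = [1, 0, 1, 1, 0, 0, 1, 0]
grps = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap = demographic_parity_difference(preds, grps)
needs_review = gap > MAX_GAP
print(f"selection-rate gap: {gap:.2f}, needs review: {needs_review}")
```

A check like this can run alongside ordinary unit tests, so that a widening gap is flagged during development rather than discovered after deployment.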

For higher-risk solutions, I can also help clarify which additional measures are often relevant. This may include the need for human involvement, closer follow-up, or clear agreements on what to do if something goes wrong.

Organizational Governance Structures

Governance only works when it is embedded in how teams actually operate. I help design AI governance structures that fit your organization — review boards, approval workflows, documentation standards, and audit trails — without creating bureaucratic overhead that kills innovation.

This includes defining clear ownership for AI decisions, establishing feedback loops between governance and engineering teams, and building templates and tooling that make compliance a natural part of the development workflow rather than an afterthought.
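
As a rough sketch of what such tooling might look like, the snippet below checks that a record describing an AI system has every required field filled in before a release goes ahead. The field names in `REQUIRED_FIELDS` and the example record are hypothetical. They are not taken from any regulation or standard, and an actual template would be defined together with the teams that use it.

```python
# Hypothetical documentation fields, for illustration only.
REQUIRED_FIELDS = (
    "owner",
    "intended_use",
    "risk_tier",
    "human_oversight",
    "last_reviewed",
)


def missing_fields(record):
    """Return the required fields that are absent or empty in a record."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


# Made-up example record with two gaps.
example_record = {
    "owner": "credit-risk-team",
    "intended_use": "Pre-screening of loan applications",
    "risk_tier": "high",
    "human_oversight": "",
}

gaps = missing_fields(example_record)
if gaps:
    print("Documentation incomplete, missing:", ", ".join(gaps))
else:
    print("Documentation complete")
```

Run as part of an approval workflow, a check of this kind lets the review board focus on the content of the documentation rather than on chasing missing entries.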